What Is Snowflake and Why It Solves Common Cloud Data Warehouse Woes

In the last decade, there has been an explosion in data. Driven by advances in wireless connectivity, compute capacity, and the proliferation of the Internet of Things (IoT) devices, data now dominates significant portions of our lives as consumers.

The same is true for businesses who are becoming increasingly data-driven to improve products, operations, and sales.

Evidently, there are no signs of this trend slowing down: market intelligence firm IDC predicts the volume of data created each year will top 160 ZB by 2025, a tenfold increase over the amount of data created in 2017.

With so much data available, businesses must find a way to make sense of it. However, the rise in popularity of multi-cloud and the spread of this data across both cloud and on-premise poses a significant challenge. Many organizations are now faced with managing a combination of on-premise and cloud data warehouse solutions.

In this blog, we’ll introduce the value and challenges of cloud data warehouses and offer a deep dive into Snowflake, the leading cloud-agnostic data warehouse platform including its benefits and a comparison with other cloud data platforms. We’ll demonstrate how using Snowflake allows organizations to handle vast amounts of data spread across different clouds and on-premises, enabling them to focus on the data analysis and make better decisions based on their data.

The Rise of the Data Warehouse

Before the inception of the data lakes (a central repository that holds a large amount of structured and unstructured data at any scale in its native, raw format), small and large enterprises used data warehouses for data analytics and reporting needs.

What Is a Data Warehouse?

The data warehouse, or enterprise data warehouse (EDW), is an organization's core analytics system that aggregates data from different sources into a single, central, consistent data store to support data analysis, data mining, and artificial intelligence (AI), and machine learning.

It enables organizations to run powerful analytics on vast volumes of historical data to make better-informed business decisions.

Traditionally, a data warehouse is hosted on-premises. Its functionality focuses on extracting data from other sources, cleansing and preparing the data, and loading and maintaining it in a relational database.

It can be hosted on a dedicated appliance (a combination of hardware and software products explicitly designed for analytical processing) or in the cloud. Most data warehouses have added analytics capabilities, data visualization, and presentation tools.

Cloud Data Warehouse Platforms

Choosing a data platform that can handle massive volumes of big data, high speeds, and reliability—not to mention the ease of use—is top of mind for many businesses as they struggle to make sense of all their data.

Many enterprises are already utilizing cloud data platforms or are seriously considering it as part of a long-term strategic initiative to become a cloud-first, data-driven organization.

Snowflake is the most popular solution, supporting multi-cloud infrastructure environments such as Amazon, Microsoft, and GCP. It’s a highly scalable cloud data warehouse “as-a-service” enabling users to focus on analyzing data rather than spending time managing and tuning. .

What Is Snowflake?

Founded in 2012, Snowflake is one of the most popular cloud-agnostic Software-as-a-Service (SaaS) based cloud data warehouses.

It is built on top of the following cloud platforms’ infrastructure and allows storage and compute to scale independently:

- Amazon Web Services

- Microsoft Azure cloud

- Google Cloud Platform (GCP)

As a multi-purpose cloud data warehouse, it can be used for data lakes, operational data stores, data warehouses, and data marts. It enables data storage, processing, and analytic solutions that are faster, easier to use, and far more flexible than traditional offerings. Its automatic up and down scalability and decoupled Compute and Storage architecture help balance performance and operational cost.

Why Choose Snowflake to Handle Your Data?

1. Snowflake’s Unique Architecture Enables Organizations to Focus on Data

Snowflake is a cloud-built data platform built on a hybrid (shared-disk and shared-nothing) database architecture. Its unique design and architecture enables businesses to take advantage of the following:

- Performance: The high volume of data can be loaded and retrieved faster with vertical and horizontal scaling of virtual warehouses.

- Data Sharing: It enables organizations to share the data with data consumers through reader accounts that can be created directly from the user interface.

- Support for Structured and Semi-structured data: Yet another huge benefit is that Snowflake supports both structured and semi-structured data. Data can be loaded directly in its raw format without going to the ETL or ELT process.

- Concurrency: Often organizations run into concurrency issues when many users try to run the queries simultaneously in traditional data warehouse platforms. However, under Snowflake's unique multicluster architecture approach, queries running from one virtual warehouse can never affect other virtual warehouses.

- Billing: Snowflake’s multi-cluster shared data architecture separates the storage resources from compute resources, allowing organizations to pay for the compute resource per second. In contrast, storage is billed by terabyte per month. Because of Snowflake’s unique architecture approach, workloads run in parallel without any contention.

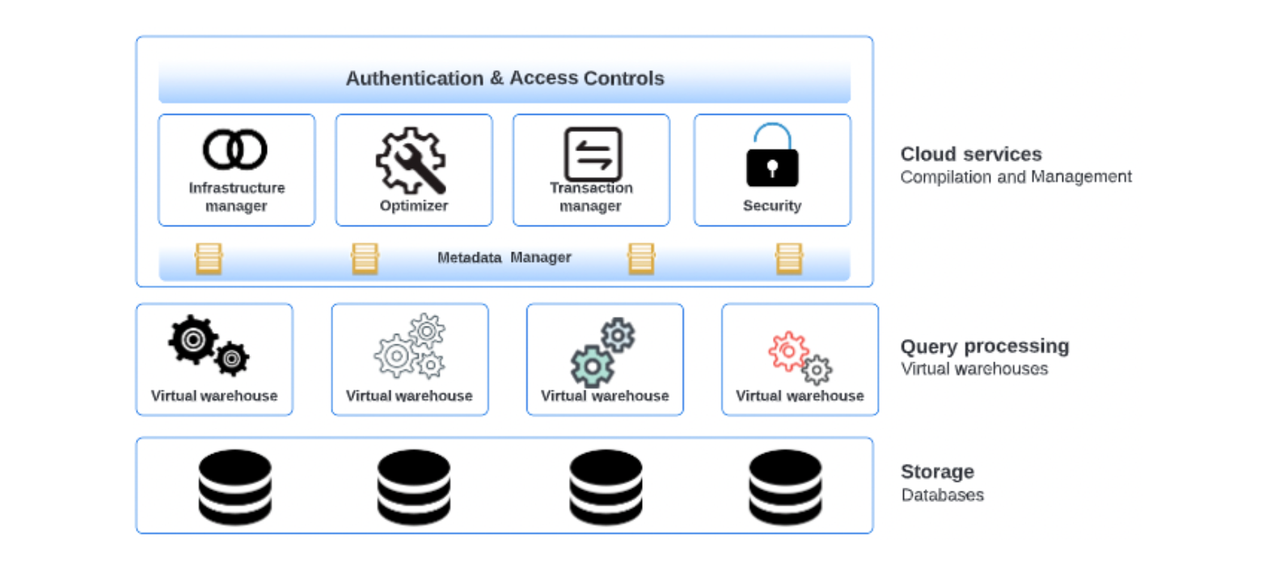

Figure 1 - Snowflake Architecture

Snowflake architecture consists of three layers, illustrated in Figure 1 above:

- Cloud Services

- Query Processing (compute)

- Database Storage

Cloud services:

The Snowflake Cloud Services layer is the system's brain, which coordinates and manages the entire system. These services tie together all of the different components of Snowflake to process user requests, from login to query dispatch. The services layer is fully maintained by a snowflake and runs on compute instances provisioned by Snowflake from the cloud provider.

This layer manages the following services:

- User Authentication and Access Controls

- Infrastructure Management by managing virtual warehouses and storage

- Manages sessions, secures data, and performs query compilation and optimization

- A vital component of the services layer is the Metadata Store of tables and micro partitions, which powers several unique snowflake features, including zero-copy cloning, time travel, and data sharing

Query Processing:

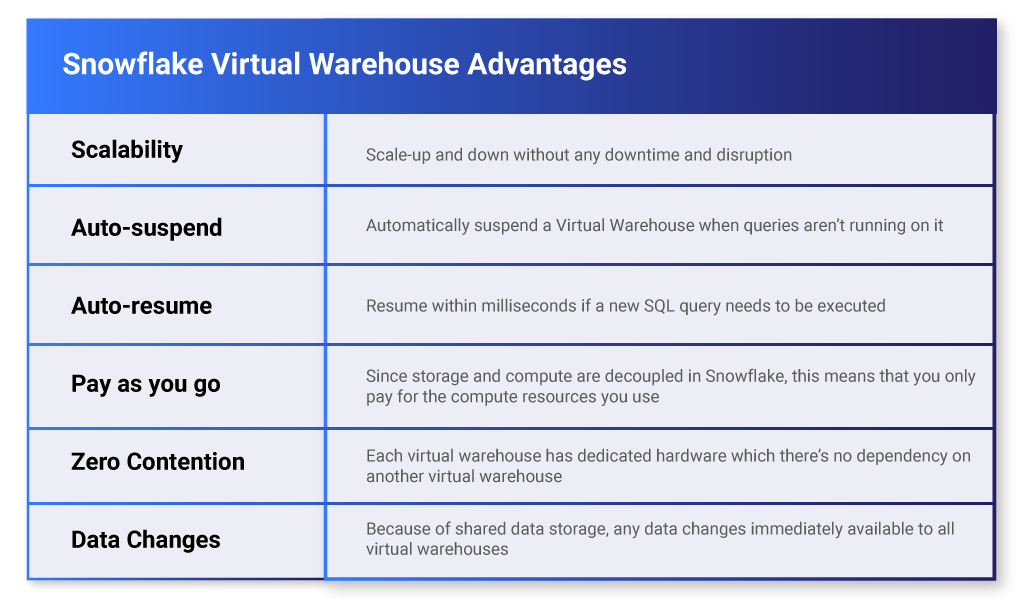

This layer handles query execution using resources provisioned from a cloud provider. With Snowflake, you can create multiple independent MPP compute clusters (called Virtual Warehouse) that do not share compute resources with each other and have no impact on the performance. Table 1 describes some of the key advantages of Virtual Warehouses:

Table 1 - Virtual Warehouse Advantages

Database Storage:

Snowflake uses highly scalable and secure cloud storage to store structured and semi-structured data like JSON, AVRO, and Parquet. The storage layer consists of tables, schemas, and databases. Snowflake reorganizes the data into its internal optimized, compressed, columnar format. Data objects stored in Snowflake can only be accessed via SQL queries through the Compute layer and are hidden from users. The database storage layer consists of multiple encrypted micro partitions which scale automatically.

2. Snowflake Warehouse Scaling Options

Snowflake supports two ways to scale warehouses:

- Scale-Up - Warehouse Resizing: Generally, it helps improve large and complex query performance by resizing the snowflake warehouse.

- Scale-Out - Adding Clusters to a multi-cluster warehouse: Designed specifically for handling queuing and performance issues related to large numbers of concurrent users and queries. Moreover, multi-cluster warehouses can help automate this process if your number of users/queries tend to fluctuate.

3. Snowflake Access Control Framework Offers Unrivaled Data Security

Access control is one of the crucial concepts in all database environments. Access control is a data security privilege that determines who can access and perform operations on specific objects in Snowflake.

- Discretionary Access Control (DAC): Every object in Snowflake has an owner and can grant access to that object.

- Role-based Access Control (RBAC): A mechanism for granting or denying access to objects using roles associated with users.

4. How to Access Securable Objects Using Roles in Snowflake?

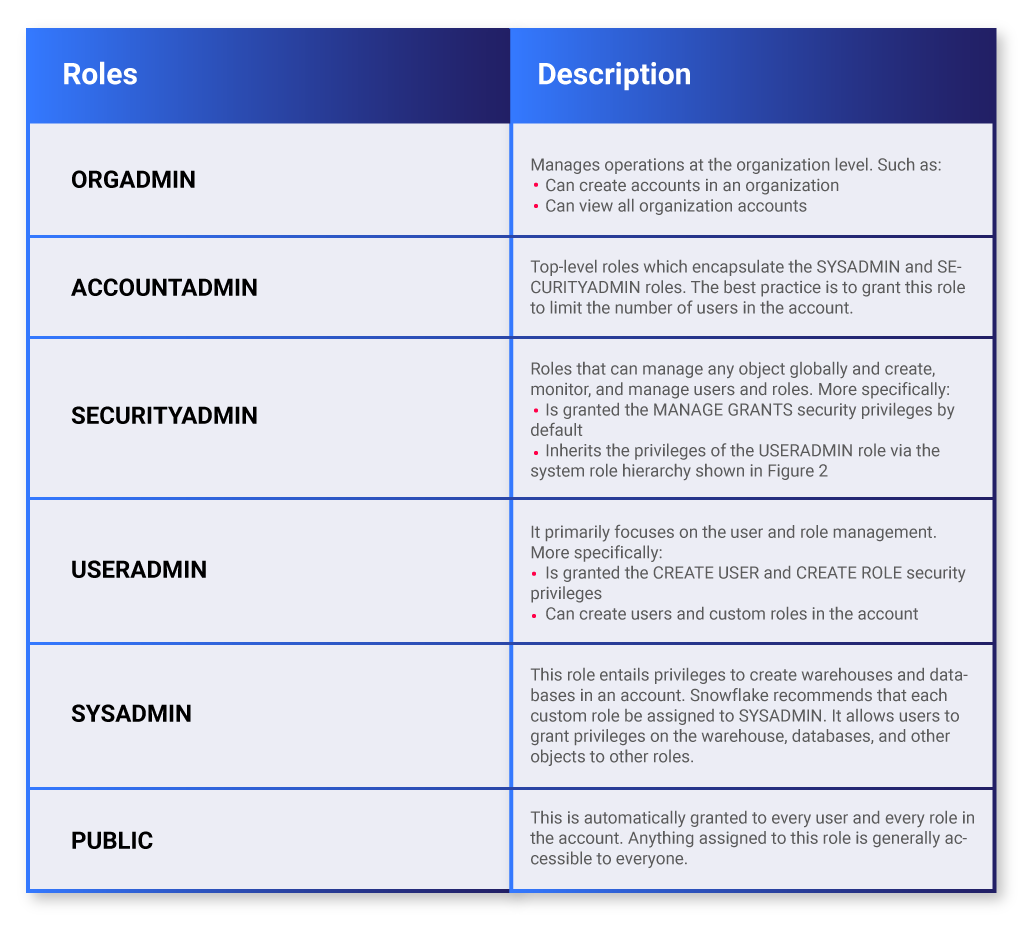

Roles are the entities to which privileges on securable objects can be granted and revoked. Users can possess multiple roles, which allow them to perform actions required for business functions in their organization. This will enable users to switch roles (i.e., choose which role is active in the current Snowflake session) to perform different actions using separate privileges.

Snowflake supports two kinds of roles:

System-Defined Roles:

Table 2 illustrates pre-defined system roles and their description in Snowflake:

Table 2 - System-defined Roles (Source: Snowflake Documentation)

Custom Roles:

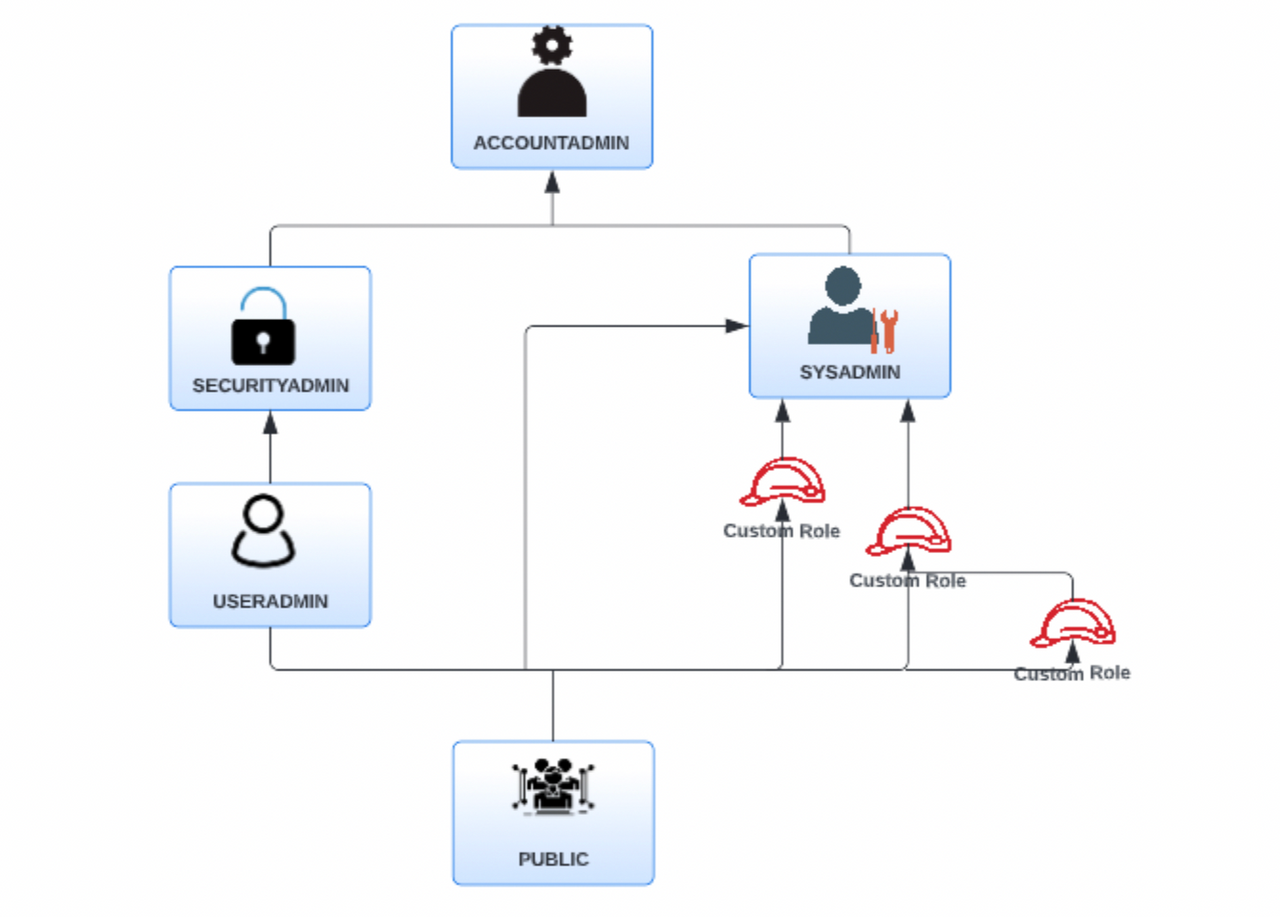

Along with pre-defined system roles, Snowflake provides flexibility to create Custom roles that can also be created by using the USERADMIN role (or higher role) and any role for which the CREATE ROLE privilege has been granted. By default, a newly-created role is not assigned to any user nor granted to any other role. Snowflake recommends creating a hierarchy (shown in Figure 2) of custom roles, with the top-most custom role assigned to the system role SYSADMIN.

This role structure allows system administrators to manage all objects in the account, such as warehouses and database objects while restricting the management of users and roles to the USERADMIN role.

Role Hierarchy

Figure 2 illustrates the hierarchy for the system-defined roles along with the recommended structure for additional, user-defined custom roles:

Figure 2 - Role Hierarchy in Snowflake

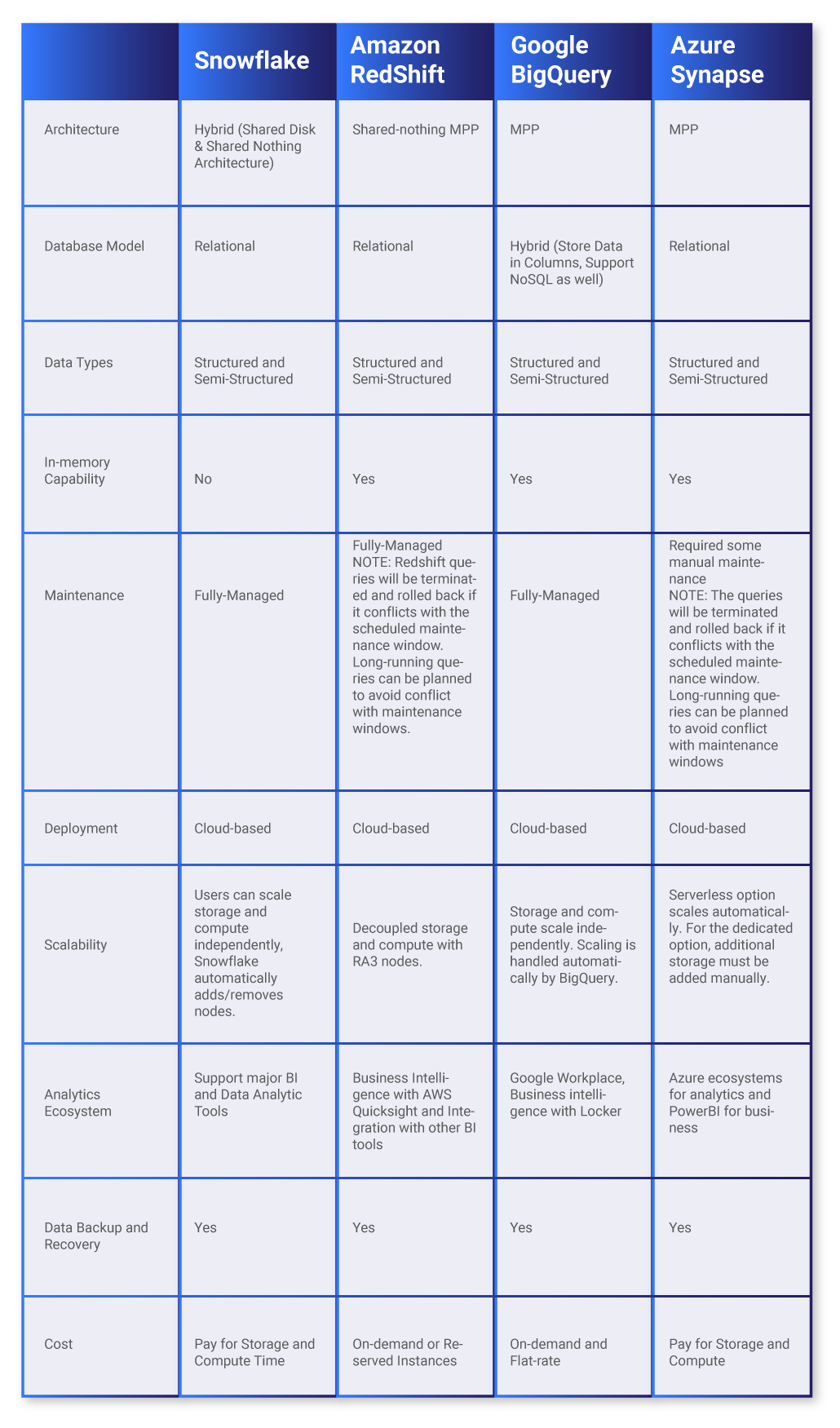

Snowflake Vs Other Cloud Data Warehouses

Enterprises are using leading cloud providers including GCP, Microsoft Azure and AWS, with Snowflake integration, to build modern data warehouse solutions in the cloud.

All offer highly scalable and reliable data warehouse solutions but some differences in technical details and price models are listed in the table below.

You and your business must determine the best-suited cloud data warehouse platform based on your business use cases and needs. If you’re stuck, Contino can partner with your organization to analyze your business needs and recommend and help build the best-suited cloud data warehouse platform.

Table 3 illustrates Snowflake comparison with other major cloud data warehouses:

Table 3 - Comparison of other Major Cloud Data Warehouses

How to Safely Navigate the Observability River: Your Complete Guide to Monitoring & Observability

Everyone is looking for new ways to improve their platforms and applications, but where do you start?

We’ve got to look at the picture as a whole… It’s time to take a trip down the Observability River.

4 Use Cases for Snowflake

Industries like healthcare, financial services, media, and entertainment are investing heavily in Snowflake because of its diverse offerings and benefits listed above.

The following are some common cloud data platform use cases for Snowflake:

1. Data Ingestion

Presently, structured and semi-structured data is streaming into the business from different sources. Because of the influx of data, data ingestion at a reasonable speed for immediate use can be cumbersome. In addition, data sets that require continuous ETL or ELT of the data become challenging.

Snowpipe is Snowflake's continuous data ingestion service, enabling enterprises to load the data from external storage locations such as S3, GCP bucket, and Azure Blob as soon as it's available to stage. Using auto ingest and configuring cloud provider notification enables to load data continuously in tables.

2. Business Intelligence

Data warehousing is a critical part of any business intelligence operation. Using Snowflake, enterprises can implement data warehouses faster, which can be used for ad-hoc analysis by running SQL queries. Snowflake can easily be integrated with business intelligence tools such as QuickSight, Looker, PowerBI, and Tableau.

3. Machine Learning (ML)

Many enterprises use Machine Learning algorithms to make predictions on the data. Feature engineering requires data engineers to build, test, and select appropriate attributes for their ML models.

Once models are deployed, the real challenge is a large volume of clean, new, and historical data to ensure ML model accuracy. Copies of entire data sets must be made to support each experiment which can be done using the zero-copy cloning feature of Snowflake.

Also, Apache Spark can be used and integrated directly with Snowflake to prepare data quickly and build Machine Learning (ML) models. Snowflake supports programming languages such as Python, R, Java, and C++, which can be used for Machine Learning (ML).

4. Data Security and Governance

Data security and governance are inevitable regarding an organization's sensitive data. With a traditional data warehouse, it's challenging and cumbersome to protect the data from external and internal data breaches.

Snowflake supports connectivity with third-party data governance tools such as Immuta, Collibra, Informatica, and many more to protect the data and place data access controls along with policies.

Here at Contino, recently, we leveraged Snowflake to stage one of our most extensive Fintech client's data to implement and enforce access control and data governance policies on data sets using Immuta (A cloud data access control platform providing data engineering and operations teams a centralized platform to control access to analytical data).

We have used Snowflake's predefined ACCOUNTADMIN role to integrate Snowflake with Immuta. Customer roles created in Immuta flow into Snowflake to enforce policies on data sets. Snowflake bulk data ingestion service (Snowpipe) helped load the data within minutes after data files were staged.

Compared to traditional data warehouses, Snowflake helped reduce operational costs while maintaining performance. Data replication between databases and tables was flawless and easy using Snowflake.

In Summary

Working with large amounts of data is a domain that requires investment in technology platforms to gather, organize, and analyze. A robust and well-designed data warehouse platform will ensure data engineers can focus on what they do best, creating new user experiences and platform features to help their customers without spending significant effort building and maintaining data systems.

Further reading:

- Snowflake: Integration with AWS S3

- Snowflake: Integration with Google Cloud Storage

- Snowflake: Integration with Azure