BigQuery OMNI: One Step Closer to the Multi-Cloud Future

Over 80% of public cloud adopters in the enterprise are reportedly working with two or more cloud providers.

The State of the Public Cloud in the Enterprise: Contino Research Report 2020

We asked 250 IT decision-makers at enterprise companies about the state of the public cloud in their organisation.

Multi-cloud is a popular choice for a variety of reasons—whether it’s based on legacy decisions, an intention to avoid vendor lock-in, the reality of having varying levels of expertise across the team, or a combination of these.

However, multi-cloud presents a number of challenges, particularly when it comes to dealing with vast amounts of data spread across different providers.

In this blog, we’ll introduce BigQuery OMNI as a tool to improve your experience with multi-cloud. We’ll cover its benefits, how it works and some potential drawbacks. You can use this information to improve your data warehousing approach and reduce time-to-insights for your business.

Multi-Cloud and the Data Challenge

The reality for many companies is that data is stored across many different clouds, and huge efforts must be made to run analytics using all of this data and to make sense of it.

Bringing all data into a single cloud (as good as it may sound) involves considerable effort and operational cost, both for migrating the data and for re-training analysts. There is also an opportunity cost to moving data: you have to wait until the migration completes before you can analyse it.

Overall, it very much looks like multi-cloud is here to stay, and organisations are looking for a solution to the above data challenge…

…this is where BigQuery OMNI comes into play.

What Is BigQuery OMNI?

BigQuery OMNI is the multi-cloud engine introduced by Google in mid-2020 that became available to the public at the end of 2021.

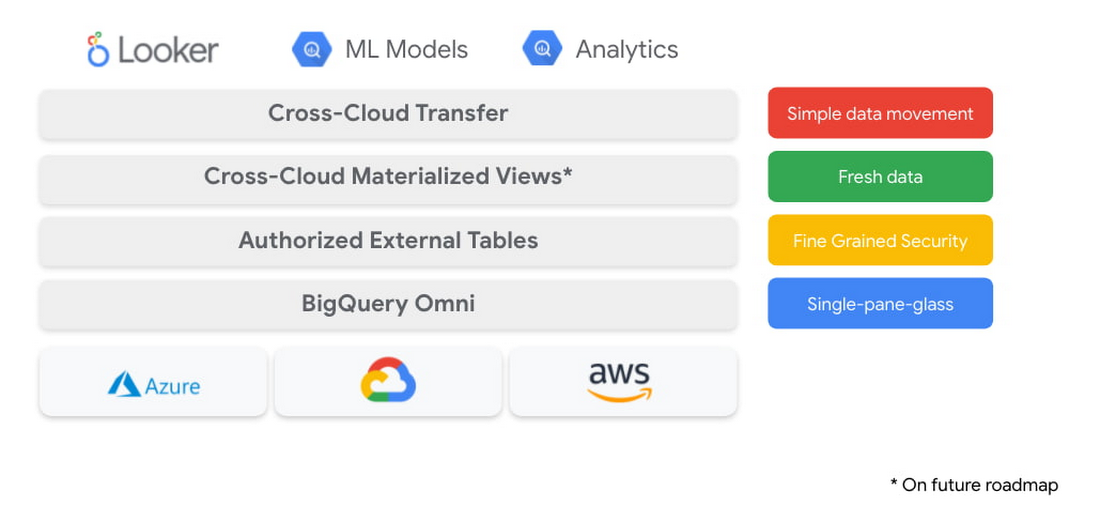

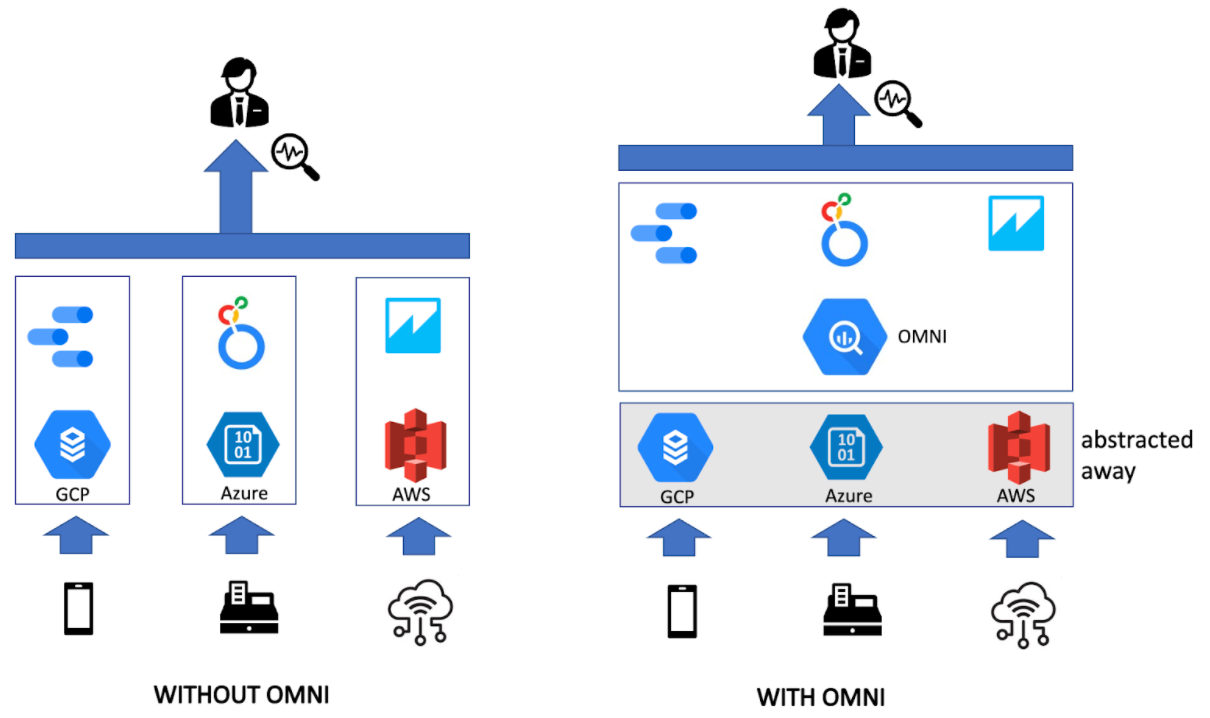

BigQuery OMNI is a “single pane of glass” for multiple clouds that can take advantage of the best-in-class analytics and machine learning engines that BigQuery offers.

It enables users to set up the connection quickly and abstract away from where the data is actually stored. Consider the location of data as an implementation detail that analysts don’t need to worry about.

What Makes BigQuery OMNI Stand Out

Why We Love BigQuery

BigQuery is one of the industry's favourites for data warehousing, and there are good reasons for it.

It’s fully managed, serverless, relatively cheap, responsive, and scalable to petabytes of data (most notably, compute and storage scale independently and on-demand). Not to mention its analytics and machine learning power (think about BQML, easy integration with Data Studio, connectors to Looker, Analytics360, and plenty of other Google services).

What OMNI Does And How It Works

Previously, BigQuery could query external data sources, but only if they were stored in the Google environment (e.g. Cloud SQL, Bigtable, Spanner, Google Drive, Google Cloud Storage). If you wanted to use BigQuery against anything outside Google, you’d have to move the data to GCP first.

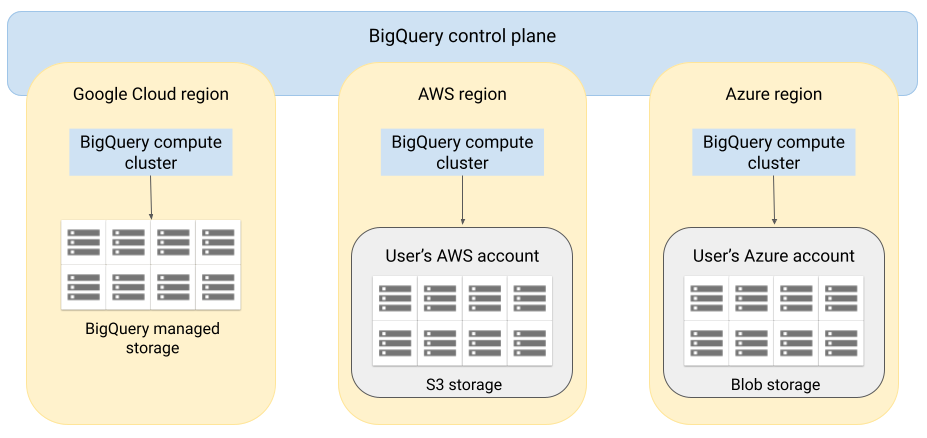

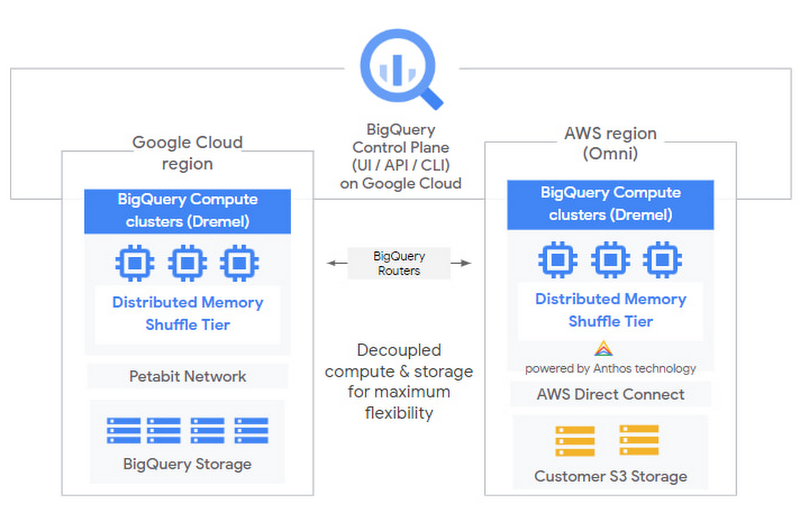

With BigQuery OMNI, you can start querying external clouds (AWS and Azure). Now you don’t need to copy the data to GCP, as all computations are done on the local clusters where the data resides.

Users can enjoy the familiar BigQuery user interface with its robust analytics and ML engine, flexible governance and compliance with local regulations about data locality! You can benefit from fine-grained security settings (row-level and/or column-level) managed from inside OMNI.

You may choose to save the query results in the local cloud (in which case no data movement whatsoever happens between the clouds), or bring query results to the Google Cloud console and save them to the BigQuery native storage if needed.
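To make the first option more concrete, here is a minimal Python sketch that only assembles the SQL text for writing query results back to S3 with BigQuery's `EXPORT DATA WITH CONNECTION` statement. The connection, bucket, dataset and table names are hypothetical placeholders, and the helper function is ours rather than part of any Google library. Treat it as an illustration and check the current documentation for the options supported in your region.

```python
def build_export_to_s3_sql(connection: str, s3_uri: str, query: str,
                           file_format: str = "CSV") -> str:
    """Return an EXPORT DATA statement that writes query results to S3.

    This only builds a string; nothing is sent to BigQuery.
    """
    return (
        f"EXPORT DATA WITH CONNECTION `{connection}`\n"
        f"OPTIONS (uri = '{s3_uri}', format = '{file_format}')\n"
        f"AS {query}"
    )


# Hypothetical names, for illustration only.
export_sql = build_export_to_s3_sql(
    connection="aws-us-east-1.my_s3_connection",
    s3_uri="s3://my-example-bucket/exports/*",
    query=(
        "SELECT device_id, COUNT(*) AS events "
        "FROM iot_dataset.events GROUP BY device_id"
    ),
)
print(export_sql)
```

The resulting statement could be pasted into the BigQuery console; because the results land in S3, nothing crosses between clouds.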

An important point is that the raw (unprocessed) data is left where it is, so there are no associated data transfer costs. You may want to check out the demo from Google Cloud Next’21.

If you are wondering what is happening under the hood, compute clusters (Dremel) are run directly in AWS/Azure, while orchestration, deployment and security are managed by Anthos.

Limitations of BigQuery OMNI

There are certain limitations you need to be aware of:

- So far OMNI only applies to limited regions in AWS/Azure (of which only AWS is available to the public)

- Running queries requires flat-rate pricing, so you can’t use on-demand pricing (or free quotas!), as Google Cloud needs to reserve slots in each of the external clouds you would like to query. The pricing is therefore relatively high, as you are paying for both services: the BigQuery engine and Anthos

- Can only load data from structured files (CSV, JSON, Avro, Parquet) in the buckets

- Query performance is lower than it would be if the data were stored in native BigQuery storage

- Cannot JOIN data residing in different locations in a single SQL query (e.g. when one piece is located in native BigQuery storage and the other in the external cloud). For now, if you need to run JOIN statements on both pieces of data, the workaround is to save OMNI query results to a new or existing table in the native BigQuery dataset before joining it with other data

- BQML statements are not supported

- Query results are not cached

You can see the full list of limitations on the official website.

Below we will describe the use case for BigQuery OMNI and the steps required to set it up.

Who Will Benefit the Most From OMNI?

Example Use Case

Let’s say your company keeps data in multiple clouds, and performs separate analytics on each piece of data:

- Mobile data is stored in a Google Cloud relational database, and further analysed in Data Studio

- Transaction data is stored in an Azure data lake and, after a series of transformations, analysed in Looker

- IoT data is streamed to an Amazon data lake (S3) and then visualised in Amazon QuickSight

Imagine what a nightmare it would be to make sense of all of this data, ensure correct governance and hire analysts with expertise in each of the cloud infrastructures and services. And you’d still need to bring all of the data together to run combined analytics.

On top of that, imagine that you want to develop a machine learning model that needs to take all of this data into account. Your analysts don’t have enough programming knowledge and would like to use familiar SQL syntax to build machine learning models with BigQuery’s out-of-the-box solution.

Before BigQuery OMNI, there was no way to meet all of the above requirements in a simple and elegant way.

Now, just by setting up a connection between BigQuery OMNI and the other cloud providers (which literally takes five minutes), you can start running queries from inside BigQuery straight away using standard SQL syntax.

Your analysts can now connect all of your BI tools to BigQuery only (think of it as a control pane), enjoy a consistent experience with the BigQuery UI and abstract away from where the data is actually stored; just leave it to IT to set up the proper connection. If analysts decide to save the query results to BigQuery, they can even start experimenting with machine learning based on all of this data (thanks BQML!) in a couple of simple clicks.

How to Set Up OMNI Connection to S3

The OMNI documentation on the Google Cloud website contains detailed steps for setting up the connection, so we won’t repeat them here.

The general idea is that BigQuery accesses S3 data much as a service account would: it assumes an AWS IAM role, so permissions are governed by the AWS policies attached to that role. Permissions can be limited to read-only, but can also allow writing the query results to S3 if that’s what you need.
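As a rough illustration of the read-only versus read-write choice, the Python sketch below builds an AWS policy document as a plain dictionary. The bucket name and the helper function are hypothetical, and the action list is a minimal assumption (list the bucket, read objects, optionally write objects); the official setup steps remain the reference for the policy you actually attach.

```python
import json


def build_s3_policy(bucket: str, allow_write: bool = False) -> dict:
    """Return an IAM policy document for reading (and optionally writing) a bucket."""
    object_actions = ["s3:GetObject"]
    if allow_write:
        # Only needed if query results should be written back to S3.
        object_actions.append("s3:PutObject")
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                # Listing applies to the bucket itself.
                "Effect": "Allow",
                "Action": ["s3:ListBucket"],
                "Resource": [f"arn:aws:s3:::{bucket}"],
            },
            {
                # Object-level actions apply to the keys inside the bucket.
                "Effect": "Allow",
                "Action": object_actions,
                "Resource": [f"arn:aws:s3:::{bucket}/*"],
            },
        ],
    }


# Hypothetical bucket name, for illustration only.
read_only_policy = build_s3_policy("my-example-bucket")
read_write_policy = build_s3_policy("my-example-bucket", allow_write=True)
print(json.dumps(read_only_policy, indent=2))
```

Keeping the write permission behind a flag mirrors the choice described above: start read-only and add `s3:PutObject` only if results need to be saved in S3.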

Note that the BigQuery dataset storing the so-called “external tables” linked to S3 will be separate from all other datasets, as it’s located in a different region (currently, only aws-us-east-1 is available). You will have to pick that region manually, so don’t confuse it with GCP’s own us-east1 (quite unintuitively, they sit at opposite ends of the dropdown list).
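For a feel of what such an external table definition looks like, here is a small Python sketch that assembles a `CREATE EXTERNAL TABLE ... WITH CONNECTION` statement as text. The dataset, table, connection and bucket names are hypothetical, the helper is ours, and the sketch assumes the dataset has already been created in the aws-us-east-1 region.

```python
def build_external_table_sql(table: str, connection: str, s3_uri: str,
                             file_format: str = "PARQUET") -> str:
    """Return DDL for an external table backed by files in S3.

    This only builds a string; nothing is sent to BigQuery.
    """
    return (
        f"CREATE EXTERNAL TABLE `{table}`\n"
        f"WITH CONNECTION `{connection}`\n"
        f"OPTIONS (format = '{file_format}', uris = ['{s3_uri}'])"
    )


# Hypothetical names, for illustration only. The dataset is assumed to live
# in the aws-us-east-1 region, alongside the connection.
ddl = build_external_table_sql(
    table="iot_dataset.events",
    connection="aws-us-east-1.my_s3_connection",
    s3_uri="s3://my-example-bucket/events/*",
)
print(ddl)

# Once the table exists, it is queried like any other BigQuery table.
sample_query = "SELECT * FROM `iot_dataset.events` LIMIT 10"
```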

BigQuery OMNI Pricing

Once the connection is up and running, there is just one last thing… pricing!

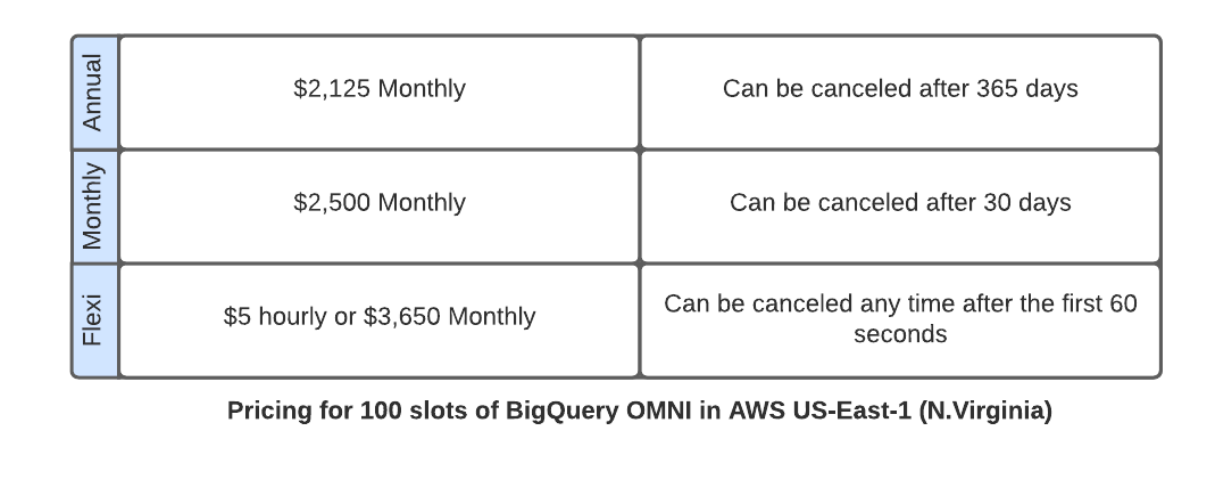

Unfortunately, at the moment BigQuery OMNI only supports flat-rate pricing, so you can’t use on-demand pricing or the free tier. In fact, you need to buy a slot commitment. Depending on your usage pattern, you might consider buying an annual, monthly or Flexi commitment (as usual, you pay extra for a more flexible cancellation policy).

The monthly price varies from $2,125 for an annual commitment to $3,650 for Flexi; the latter is charged per second and can be cancelled at any time.
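To get a feel for that trade-off, the short Python sketch below works out how long Flexi slots can be held in a month before they cost more than the annual rate. It uses only the two prices quoted above and makes two assumptions that are ours: both prices cover the same number of slots, and a month has 730 hours.

```python
ANNUAL_MONTHLY_USD = 2125.0  # monthly price on an annual commitment (quoted above)
FLEXI_MONTHLY_USD = 3650.0   # Flexi price if the slots are held for a whole month
HOURS_IN_MONTH = 730.0       # assumption: average month length in hours


def flexi_cost(hours_held: float) -> float:
    """Cost of holding Flexi slots for part of a month, billed pro rata."""
    return FLEXI_MONTHLY_USD * hours_held / HOURS_IN_MONTH


def break_even_hours() -> float:
    """Hours per month at which Flexi costs the same as the annual rate."""
    return HOURS_IN_MONTH * ANNUAL_MONTHLY_USD / FLEXI_MONTHLY_USD


# Relative premium paid for Flexi when the slots are kept all month.
flexi_premium = FLEXI_MONTHLY_USD / ANNUAL_MONTHLY_USD - 1

print(f"Flexi premium over annual: {flexi_premium:.1%}")
print(f"Break-even usage: {break_even_hours():.0f} hours per month")
print(f"Flexi for 100 hours: ${flexi_cost(100):,.2f}")
```

Under these assumptions, Flexi comes out cheaper only if the slots are held for fewer than about 425 hours a month. Bear in mind that the annual rate locks you in for a full year, whereas Flexi can be dropped at any time.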

As with any other BigQuery slots, purchasing an OMNI commitment provides dedicated BigQuery OMNI slot capacity at a given location. Note that the slots must be purchased specifically for the AWS location (aws-us-east-1), allocated to a reservation (which is a way to split up slots across workloads) and, if needed, assigned to a specific project.

Now you’re ready to go!

Conclusion

In the multi-cloud era we are now in, Google’s bet on BigQuery OMNI is paying off. We’ll likely see more Google-managed services brought to multi-cloud environments in the future, making this the new norm.

BigQuery OMNI simplifies life for the many analysts who have to deal with data stored across different clouds. Now they can use the familiar BigQuery UI and don’t need any coding knowledge to bring data together for combined analytics.

Computations are done locally, data remains where it is, and only the query results (if needed) are passed to GCP. Apart from the query results, no raw data moves between clouds, which makes governance and security concerns less pronounced. However, keep the pricing and region limitations in mind if those are important factors for you.

Whether you are already experiencing the multi-cloud challenges discussed above, or you’re just a curious cloud practitioner, you should start exploring OMNI to get a better understanding of “the art of the possible”. BigQuery OMNI can take the heat off data analytics, data engineering and data science teams, and reduce time-to-insights. Start evaluating your data structure and enjoy the newly uncovered strength of your analytic capabilities!

If you’d like to discuss where you are on your multi-cloud journey, and how Contino could support you as a Google Cloud Premier Partner, get in touch!