How Can Enterprises Scale Agile Frameworks Without Suffocating Teams with Processes?

This blog was co-written by Contino consultants Hibri Marzook and Ryan Lockard.

The study of work is intriguing. Entomologists spend entire careers studying the work habits of insects, biologists study the patterns of microbes and organisms, and, throughout history, humans have tried to study and control the way our own work is structured. The intent behind the study of human work behavior is typically linked to value optimization and waste reduction. In the late 19th and early 20th centuries, for example, this work was codified by Frederick Taylor in the manufacturing plants of industrial America. His “scientific” studies led to decades of management practices all centred around the benefits of highly controlled and repeatable working practices.

In the context of an assembly line, Taylorian Scientific Management had some value. The gears, bearings, thread counts and so on were predictable and could be controlled, managed and optimized. Taylor’s approach spread across factories globally. It would only seem natural to evolve this proven approach to the next industrial boom: the technological revolution.

In the 1950s and 1960s, programming emerged as a trade and the factory mindset quickly shifted to this new domain. Waterfall is a project management technique characterized by upfront planning of linear, sequential tasks. Ironically, it was a paper by Winston Royce explaining why this is a flawed model for software development that ignited the Waterfall wave of engineering that is still in place in enterprises today.

Within Waterfall, the perceived safety of a well-curated plan gives people a sense of confidence in the face of the unknowns of software development. But some quickly noticed that, in spite of the desire to improve efficiency and remove waste, this operating model was shot through with inefficiency and high degrees of waste. More time and effort was spent planning the work than actually executing it. And, what’s worse, more than 50% of the time the plans were wrong!

Changes Start Emerging

Around the beginning of the 1990s, some amazing changes in working practices started to emerge. Object-oriented languages like C++, Java and Object Pascal started to take hold, and with them came approaches that embraced this new wave of creating software, such as Rapid Application Development (RAD) and Object Oriented Programming (OOP). As these models matured, a new framework emerged from the Rational Software Corporation called the Rational Unified Process (RUP). Its intent was said to be “...not a single concrete prescriptive process, but rather an adaptable process framework, intended to be tailored by the development organizations and software project teams that will select the elements of the process that are appropriate for their needs.” It had minds like Philippe Kruchten, Dean Leffingwell, Scott Ambler and Dave West looking after its creation and maturation.

RUP quickly became the operating model for a lot of technology companies and, through the heavy process it prescribed, reclaimed the psychological wins seen in Waterfall by instilling a sense of delivery control. While RUP tried to position itself as an open framework, in practice it was used as an implementation guide and began to hamstring disruption and innovation as badly as, or worse than, Waterfall ever did.

If not for RUP, it is unlikely that the 17 angry men who authored the Agile Manifesto in 2001 would ever have met. From the works of these authors, including Martin Fowler, Kent Beck, Ron Jeffries and Bob Martin, came Agile: a new, lightweight way to approach software engineering. It is a model that embraces collaboration and puts a stern focus back on actually creating healthy, resilient software, rather than on planning and control!

Following the work of the Agile Manifesto group, lighter frameworks and practices gained traction, like Scrum, and various lean-derived models like Kanban and Lean Startup. Agility enablers that complement these frameworks, like DevOps and cloud infrastructure, emerged shortly afterwards.

Agile in the Enterprise

Over the last decade, I’ve looked at these high-velocity, output-focused delivery models and wondered: “how could this work in the enterprise?”. These smaller, nimble, empowered teams work great in small organizations, but surely we need more rigor and governance for large enterprise organizations?

Where opportunity lives, solutions thrive. Some of the leads from RUP looked at these concerns and brought forth new “agile” models for the enterprise. Scott Ambler introduced Disciplined Agile Delivery (DAD), Dave West joined Scrum.org and released the Nexus framework, and Dean Leffingwell is best known for his work on the Scaled Agile Framework (SAFe).

Each of these models aims to close the gap in agile frameworks for large regulated organizations, but each has its own limitations, which we explore below.

Why Implement Agile Frameworks in the Enterprise?

Why do enterprises turn to agile frameworks? The most common justification we hear for scaling agile is to manage dependencies between teams.

Teams typically organize themselves around existing organizational structures or technical specializations. Work that is then farmed out to these existing teams creates more intricate interdependencies.

To explain why this happens, we need to take a look at something called Conway’s Law, which states that “any organization that designs a system will produce a design whose structure is a copy of the organization's communication structure”.

Therefore, a way of managing the dependencies between each existing team is needed, because the value stream for a deliverable spans multiple teams. Heavy upfront planning and detailed design are introduced to co-ordinate the work between teams. The technical design reflects the requirement for this coordination instead of having just enough design to deliver and get feedback (i.e. Agile). Heavy upfront design and planning is an Agile anti-pattern.

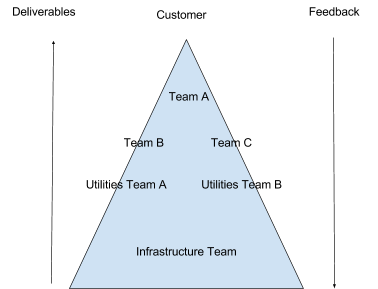

For example, say an organization embarks on a “digital” transformation programme. To deliver the transformation, Product A, Product B and Product C need to be delivered. A team for each product is spun up: Team A, Team B and Team C. All three teams will have a dependency on the infrastructure needed to develop and run these products. So, in turn, an infrastructure components team is spun up: the DevOps team.

Whilst the three products are in the early inception phase, architects decide that some common functionality might be shared between all three products. The organization now spins up a new team, the Common Utilities Team, sometimes called the Core Components Team. This team also has a dependency on infrastructure.

Now Team A, Team B and Team C have dependencies on the deliverables of the Common Utilities Team and the DevOps team.
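The dependency structure in this example can be sketched as a small directed graph. The snippet below is only an illustration in Python: the team names come from the example, while the `DEPENDS_ON` mapping and the helper names `transitive_dependencies` and `dependents` are hypothetical.

```python
# Hypothetical model of the example: each team maps to the teams it
# directly waits on. Team names come from the example in the text; the
# data structure and helper names are illustrative assumptions.
DEPENDS_ON = {
    "Team A": {"Common Utilities", "DevOps"},
    "Team B": {"Common Utilities", "DevOps"},
    "Team C": {"Common Utilities", "DevOps"},
    "Common Utilities": {"DevOps"},
    "DevOps": set(),
}


def transitive_dependencies(team, graph):
    """Return every team that `team` directly or indirectly waits on."""
    seen = set()
    stack = list(graph[team])
    while stack:
        current = stack.pop()
        if current not in seen:
            seen.add(current)
            stack.extend(graph[current])
    return seen


def dependents(team, graph):
    """Return every team that is held up, directly or indirectly, when `team` is late."""
    return {
        other for other in graph
        if team in transitive_dependencies(other, graph)
    }


if __name__ == "__main__":
    for team in DEPENDS_ON:
        print(team, "holds up", sorted(dependents(team, DEPENDS_ON)))
```

Even in this tiny model, a delay in the DevOps team holds up every other team, while none of the product teams holds up anyone else. That asymmetry is what the coordination overhead tries to manage.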

In addition to creating lots of upfront design and planning work, this situation slows down a key characteristic of Agile: feedback.

Feedback loops underpin Agile ways of working. Fast feedback is needed to fail fast. Fast feedback is needed to know if we are building the right thing. Long or non-existent feedback loops are also an Agile anti-pattern.

But the scattering of the value stream across diverse teams creates an “ecological pyramid” of feedback. Teams at the apex of the pyramid are able to get quick feedback. Teams at the bottom of the pyramid get delayed or diluted feedback on how their deliverables are used. Applying an “Agile Scaling” framework amplifies Agile anti-patterns, which drown out the benefits that Agile teams have attained.

It’s to solve these problems that enterprises decide they need to find a way of scaling Agile effectively in their organization. Here are some ways that we have found to be effective.

Scaling Agile in the Enterprise

So how does an organization reap the benefits of Agile ways of working at an organizational scale? How do we sustain mature Agile teams and not suffocate them with process-heavy frameworks?

Scaling in a complex adaptive system is a strange beast. What works for two teams might not work for three teams. What works for four teams might not work for two teams or seven teams. And what about the 100-500 team enterprise? The scaling factor can amplify or attenuate existing dysfunctions within your organization. You have to be finely attuned to how the organization and people react.

First, accept that whatever method is chosen, there will be failure. The key is to fail fast. Failure is the single most effective means of enabling organizational learning. You are on a continuous, iterative journey to find out what combination of tools from the Agile toolbox works for your unique organizational context.

Try something for two teams and, if it works, experiment to see if it works across three teams. If it doesn’t, listen to the feedback and try something else. Rinse and repeat until you find a way of working that works for your organization. To do this, you’ll need an organization-wide willingness to accept uncertainty in small doses.

Interestingly, some of the largest and most successful organizations, like Google, Amazon and Spotify, do not subscribe to these prescriptive scaling models. Spotify has actually published its operating model for others to see, but explains that it is not a lift-and-shift framework; it is just what works for them. What these organizations rely on, more than project-management-style operating models, is highly resilient architecture, microservice-based systems, rapid releases with tightly integrated feedback loops, and highly market-focused (and technically savvy) business people. This focus on high release frequency and automated delivery pipelines is a deliberate way to manage dependencies and to highlight obvious flaws in the build chain. Relying on people to build elements of value (software) and not waste (copious documentation and meetings) allows truly technical organizations to metaphorically and literally overtake those enterprises hamstrung by process.

Overcoming Process: the Momentum Model

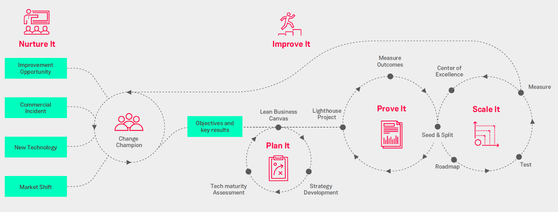

Beyond theory lives a simple model that allows for enterprise-level agility based on the simple principles of agility and DevOps, but that also embraces fluidity. The scaling method below is based on the Contino Momentum model. It follows a simple process of:

Nurture It > Plan It > Prove It > Scale It > Improve It

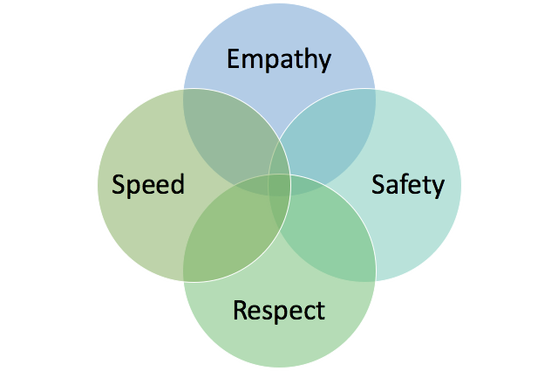

Focused on a core set of simple values, this is an open model that enables enterprises to bypass heavy process, wasteful project management-laden cycles and expensive certifications. It shifts the focus from acronyms, delivery metaphors and reporting layers to an outcome-based pipeline of delivery that speaks to the core of enterprise DevOps and business-focused software delivery. To realize meaningful operational efficiencies, the Momentum scaling model relies on teams and those involved in the value chain to embrace the simple values below:

- Empathy: knowledge work is significantly different from the work studied by Frederick Taylor in the industrial revolution. It requires deep contextual thinking, social collaboration and a hard-won respect for the craft of programming and quality. Often, those in traditional management and project management roles have never paused to appreciate the journey of a modern knowledge worker, and a shift to an empathetic stance with this group is critical.

- Safety: real learning comes through failure. Embracing a culture where failure is not met with condemnation is difficult, especially in established, highly-regulated industries where ego and throughput are the accepted norm.

- Speed: when teams improve and scale, a new level of learning loops, data collection and enterprise enablement will be realized. The organization has to ensure all members of the value stream are empowered and ready to leverage this learning. Having product decision makers talk to intermediaries to talk to delivery teams will no longer be acceptable. Choke points can cripple the rapid acceleration phase of Enterprise DevOps and must be exposed and eliminated.

- Respect: for teams to identify and address failure, there has to be mutual respect. In engineering, we say that the shift to cloud means you stop treating your servers like pets and start treating them like cattle. You love your pets, you manage your cattle. The same is true of ideas and experiments. Treat them as falsifiable hypotheses. When an improvement cycle comes it should not be met with blind rejection, but rather with the respectful acceptance that there may be a better option.

Below is our model for scaling Agile in the enterprise. As you can see, this is a simple approach for managing complex growth in a complex environment. Avoiding the complex and leveraging the simple is the fastest means to produce real business outcomes.

What follows are some key tips to bear in mind when scaling Agile in the enterprise:

Limit Work in Progress (WIP) and reduce batch sizes

Help teams identify constraints within their delivery flow. Work in progress (WIP) limits and smaller batch sizes help to discover these constraints. When teams are able to deliver or “flow” smaller pieces of work through their pipelines, they reduce the time a dependent team has to wait. Small batch sizes, along with quick cycle times, allow a team to be interrupted to take on a request from a dependent team. Measure, share and be aware of cycle and lead times for all teams.
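As a rough illustration of what measuring cycle and lead times could look like, here is a minimal Python sketch. It assumes lead time runs from request to delivery and cycle time from start of work to delivery; the `WorkItem` fields and function names are hypothetical. It also uses Little's Law (average cycle time = average WIP / average throughput), a relationship not mentioned above that holds on average for a stable flow of work, to show why limiting WIP shortens waits.

```python
from dataclasses import dataclass
from datetime import date
from statistics import mean


@dataclass
class WorkItem:
    """One piece of work; the field names are illustrative assumptions."""
    requested: date  # when a dependent team or customer asked for it
    started: date    # when the delivering team began working on it
    finished: date   # when it was delivered

    @property
    def lead_time_days(self) -> int:
        # Lead time: request to delivery, as seen by whoever is waiting.
        return (self.finished - self.requested).days

    @property
    def cycle_time_days(self) -> int:
        # Cycle time: start of work to delivery, as seen by the team.
        return (self.finished - self.started).days


def can_pull_new_work(in_progress: int, wip_limit: int) -> bool:
    """A team pulls new work only while it is under its WIP limit."""
    return in_progress < wip_limit


def expected_cycle_time_days(avg_wip: float, throughput_per_day: float) -> float:
    """Little's Law: average cycle time = average WIP / average throughput."""
    return avg_wip / throughput_per_day


if __name__ == "__main__":
    items = [
        WorkItem(date(2024, 1, 1), date(2024, 1, 8), date(2024, 1, 10)),
        WorkItem(date(2024, 1, 2), date(2024, 1, 3), date(2024, 1, 5)),
    ]
    print("mean lead time (days):", mean(i.lead_time_days for i in items))
    print("mean cycle time (days):", mean(i.cycle_time_days for i in items))
    # At the same throughput, halving WIP halves the expected cycle time.
    print(expected_cycle_time_days(12, 2), expected_cycle_time_days(6, 2))
```

The gap between lead time and cycle time is the time a request sits in a queue before anyone touches it, which is often where a dependent team's waiting comes from.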

Build teams around goals driven by a unified vision

In 2008, Chris Shaffer tried something chaotic and crazy at Microsoft. Instead of restructuring and reorganizing his teams, Chris proposed to let people decide what they wanted to work on. They wrote a list of business priorities and investment areas on a whiteboard and gave everybody a sticky note to write their name on. En masse, everyone would go to the whiteboard and put their name against what they wanted to work on.

They self-organized around clear goals with a unified vision. They stepped out of their own team boundaries to create new teams focused on delivering. Microsoft has repeated this exercise about three times since then.

Organizing, or even self-organizing, around goals allows teams to break the communication structure imposed on them and to create one that is better suited to the goal the organization needs to achieve. Conway’s Law implies that to build the system needed, an organization has to alter its communication structure. By organizing around goals, we can remove inter-team dependencies and we are left with teams who can deliver without waiting for a deliverable from another team.

Do you need to build everything?

A unified vision will allow your organization to figure out what it should focus on. And, crucially, what it should not. Based on a good understanding of the technology landscape, where does the value for your organization lie? Is there more value in building private cloud infrastructure, or in focusing on delivering the software that actually brings in money to the company?

By using a public cloud-native architecture and not building your own data centre (unless that is your organization’s core competency) you remove the dependency development teams have on the infrastructure team. Delivery teams can build their own infrastructure with a dependency only on a solvent credit card.

Are you building your own in-house CMS, or data access library? Give your teams the freedom to pick one off the shelf and move on to building the technology that matters.

Conclusion

When selecting a plan and/or a framework to help realize a plan, organizations should ask themselves: “will this approach make positive change towards my intended outcomes?”. Look towards nimble practices that serve accelerated time-to-market, embrace experimentation and the scientific method, and foster the practices described in this post.

If your transformation approach does not significantly improve your value stream and operating model, have you really positively transformed anything?