9 Killer Use Cases for AWS Lambda

AWS Lambda is an event-driven serverless computing platform.

This means that it runs code in response to events (“event-driven”), while automatically taking care of all the back-end infrastructure and admin that is needed to run your code (“serverless”).

All the developer needs to focus on is their code. The rest is taken care of "under the hood" by AWS.

Since its launch in 2014, Lambda has soared in popularity, becoming one of the most widely adopted and fastest-growing products for AWS.

Lambda can be used in many different scenarios, is easy to get started with, and can run with minimal operating costs. One reason for its popularity is its flexibility: it is the “Swiss Army knife” of the AWS platform for developers and cloud architects.

In this post, I will cover how Lambda works and what makes it different, and then explore 9 killer AWS Lambda use cases.

How Does AWS Lambda Work?

When you send your code to Lambda, you are actually deploying it in a container. The container, however, is itself created, deployed, and managed entirely by AWS. You do not need to be involved in the creation or management of the container, and in fact, you do not have access to any of the infrastructure resources that might allow you to take control of it. The container is simply a location for your code.
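To make this concrete, here is a minimal sketch of the kind of code you hand to Lambda when using a Python runtime. The handler name `lambda_handler` and the `name` field in the event are illustrative assumptions; Lambda calls whichever handler you configure, passing it the triggering event and a context object.

```python
import json


def lambda_handler(event, context):
    """Entry point that Lambda calls once per invocation.

    `event` carries the triggering data (a dict for JSON events) and
    `context` exposes runtime metadata. The `name` key is a made-up
    field used only for this illustration.
    """
    name = event.get("name", "world")
    return {"message": f"Hello, {name}!"}


if __name__ == "__main__":
    # Local smoke test; no AWS account is needed to run this.
    print(json.dumps(lambda_handler({"name": "Lambda"}, None)))
```

Everything outside this function, including the container it runs in, is provisioned by AWS.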

What Makes AWS Lambda Different?

Lambda performs all the operational and administrative activities on your behalf, including capacity provisioning, monitoring fleet health, applying security patches to the underlying compute resources, deploying your code, running a web service front end, and monitoring and logging your code.

Some could say that, so far, this is no different from any other Platform as a Service (PaaS) offering, but there are some key differentiators compared with traditional PaaS.

1. Pay-as-you-go for great cost savings

Just like other public cloud services, Lambda has a pay-as-you-go pricing model with a generous free tier, and it is one of the most appealing for cost savings. Lambda billing is based on the memory allocated to the function, the number of requests, and the execution duration rounded up to the nearest 100 milliseconds. Compared with the per-second billing of EC2, this fine-grained billing is a huge leap: you do not pay for spare compute resources.
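As a rough illustration of how those three billing inputs combine, the sketch below rounds the duration up to the 100 ms increment described above and multiplies it out. Both prices are caller-supplied placeholders, not real AWS rates, and the free tier is ignored.

```python
def billed_duration_ms(duration_ms: int, increment_ms: int = 100) -> int:
    """Round a duration up to the next billing increment."""
    return -(-duration_ms // increment_ms) * increment_ms


def estimate_cost(invocations, avg_duration_ms, memory_mb,
                  price_per_gb_second, price_per_request):
    """Estimate a bill from request count, duration, and allocated memory.

    Both prices are placeholders supplied by the caller; look up the
    current rates for your region before relying on the result.
    """
    gb_seconds = (invocations
                  * (billed_duration_ms(avg_duration_ms) / 1000)
                  * (memory_mb / 1024))
    return gb_seconds * price_per_gb_second + invocations * price_per_request
```

For example, a function that runs for 101 ms is billed for 200 ms under this rounding rule, which is why shaving a few milliseconds can sometimes matter.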

Check out our other article on how to save up to 10% on AWS by automatically managing instances with Lambda.

2. Completely event-driven

While most of the PaaS offerings are designed to be running 24/7, Lambda is completely event-driven; it will only run when invoked.

This is perfect for application services which have quiet periods followed by peaks in traffic.

3. Fully scalable

When it comes to scalability, Lambda can instantly scale up to a large number of parallel executions, controlled by the number of concurrent executions requested. Scaling down is handled automatically; when the Lambda function execution finishes, all the resources associated with it are automatically destroyed.
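One way to control the number of parallel executions for a single function is to reserve concurrency for it. The sketch below assumes you pass in a boto3 Lambda client (for example `boto3.client("lambda")`); the function name you supply is up to you.

```python
def cap_concurrency(lambda_client, function_name: str, limit: int) -> dict:
    """Reserve (and thereby cap) concurrent executions for one function.

    `lambda_client` is expected to be a boto3 Lambda client. Passing the
    client in keeps this helper easy to exercise with a stub.
    """
    if limit < 0:
        raise ValueError("limit must be zero or greater")
    return lambda_client.put_function_concurrency(
        FunctionName=function_name,
        ReservedConcurrentExecutions=limit,
    )
```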

4. Supports multiple languages and frameworks

Lambda comes with native support for a number of programming languages: Java, Go, PowerShell, Node.js, C#, Python, and Ruby. It also provides a Runtime API that allows you to author your functions in any additional programming language. There are also open-source frameworks for rapid development and deployment, such as Serverless, Apex, and Sparta, to name a few.

9 Killer AWS Lambda Use Cases

Let’s take a look at AWS Lambda in action with the following nine use cases.

- Operating serverless websites

- Rapid document conversion

- Predictive page rendering

- Working with external services

- Log analysis on the fly

- Automated backups and everyday tasks

- Processing uploaded S3 objects

- Backend cleaning

- Bulk real-time data processing

1. Operating serverless websites

This is one of the killer use cases for taking advantage of the pricing model of Lambda and S3-hosted static websites.

Consider hosting the web frontend on S3 and accelerating content delivery with CloudFront caching.

The web frontend can send requests to Lambda functions via API Gateway HTTPS endpoints. Lambda can handle the application logic and persist data to a fully-managed database service (RDS for relational, or DynamoDB for a non-relational database).

You can host your Lambda functions and databases within a VPC to isolate them from other networks. With Lambda, API Gateway, and S3, you pay only for the traffic you actually serve; the only fixed cost is running the database service.
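A minimal sketch of the Lambda side of such a site might look like the following, assuming API Gateway is configured with the Lambda proxy integration. The `id` query parameter and the response fields are hypothetical, and the database lookup is left as a comment.

```python
import json


def lambda_handler(event, context):
    """Answer an API Gateway proxy request with a JSON response."""
    params = event.get("queryStringParameters") or {}
    item_id = params.get("id")
    if item_id is None:
        return _response(400, {"error": "missing 'id' query parameter"})
    # A real function would load the item from RDS or DynamoDB here.
    return _response(200, {"id": item_id, "status": "ok"})


def _response(status_code, payload):
    """Build the response shape that the Lambda proxy integration expects."""
    return {
        "statusCode": status_code,
        "headers": {"Content-Type": "application/json"},
        "body": json.dumps(payload),
    }
```

The static frontend on S3 would call the API Gateway endpoint over HTTPS and render whatever JSON comes back.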

2. Rapid document conversion

If you are providing documents (for example, specifications, manuals, or transaction records) to your users, they may not always want them in one standard format. While many users may be happy with an HTML page, others may want to download a PDF or may need the document in a more specialised format.

You could, of course, store copies of each document in all formats that are likely to be requested. But storing static documents can take up a considerable amount of space, and it is not practical if the content of the documents changes frequently or in response to user input. It is often much easier to generate the documents on the fly. This is exactly the kind of task that an AWS Lambda application can handle rapidly and easily: retrieving the required content, formatting and converting it, and serving it either for display in a webpage or for download.
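The idea of generating a format on request can be sketched with nothing but the standard library. The function below is an illustration, not a full converter: it renders one flat record as HTML, CSV, or JSON, and the set of supported formats is an assumption made for the example. PDF or other specialised formats would need an additional library.

```python
import csv
import html
import io
import json


def render_document(record: dict, fmt: str):
    """Return (content_type, body) for one record in the requested format."""
    if fmt == "html":
        rows = "".join(
            f"<tr><th>{html.escape(str(k))}</th><td>{html.escape(str(v))}</td></tr>"
            for k, v in record.items()
        )
        return "text/html", f"<table>{rows}</table>"
    if fmt == "csv":
        buffer = io.StringIO()
        writer = csv.writer(buffer)
        writer.writerow(record.keys())    # header row
        writer.writerow(record.values())  # data row
        return "text/csv", buffer.getvalue()
    if fmt == "json":
        return "application/json", json.dumps(record)
    raise ValueError(f"unsupported format: {fmt}")
```

A Lambda handler could call this with the format taken from the request and return the body directly, so no pre-rendered copies need to be stored.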

3. Predictive page rendering

AWS Lambda can also play a major supporting role if you are using predictive page rendering, which prepares webpages for display based on the likelihood that the user will select them.

You can, for example, use a Lambda-based application to retrieve documents and multimedia files, which may be used by the next page requested, and to perform the initial stages of rendering them for display. If multimedia files are being served by an external source, such as YouTube, the Lambda application can check for their availability, and attempt to use alternate sources if they are not available.
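The availability check with fallback to alternate sources could be sketched as follows. This is one possible approach using only the standard library; the list of candidate URLs would come from your own application, and treating any 2xx or 3xx answer to a HEAD request as "available" is an assumption.

```python
import urllib.request


def is_reachable(url: str, timeout: float = 3.0) -> bool:
    """Return True if a HEAD request to the URL succeeds."""
    request = urllib.request.Request(url, method="HEAD")
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return 200 <= response.status < 400
    except OSError:
        # Covers connection failures, timeouts, and HTTP error statuses.
        return False


def first_available(urls, check=is_reachable):
    """Return the first URL that passes the check, or None if none do."""
    for url in urls:
        if check(url):
            return url
    return None
```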

4. Working with external services

If your website or application needs to request services from an external provider, there's generally no reason why the code for the site or the main application needs to handle the details of the request and the response. In fact, waiting for a response from an external source is one of the main causes of slowdowns in web-based services.

If you hand requests for such things as credit authorization or inventory checks to an application running on AWS Lambda, your main program can continue with other elements of the transaction while it waits for a response from the Lambda function. This means that in many cases, a slow response from the provider will be hidden from your customers, since they will see the transaction proceeding, with the required data arriving and being processed before it closes.
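One way to hand off such a request without blocking is an asynchronous Lambda invocation. The sketch below assumes a boto3 Lambda client is passed in, and the function name `credit-check` is a placeholder. Note that an asynchronous invocation does not return the function's result, so the answer would have to come back through another channel such as a queue or a database record, which is not shown here.

```python
import json


def request_credit_check_async(lambda_client, order: dict,
                               function_name: str = "credit-check") -> int:
    """Queue an asynchronous invocation and return immediately.

    `lambda_client` is expected to be a boto3 Lambda client. The default
    function name is a placeholder, not a real resource.
    """
    response = lambda_client.invoke(
        FunctionName=function_name,
        InvocationType="Event",  # asynchronous: do not wait for the result
        Payload=json.dumps(order).encode("utf-8"),
    )
    # Lambda answers 202 when an asynchronous invocation was accepted.
    return response["StatusCode"]
```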

5. Log analysis on the fly

You can easily build a Lambda function to check log files from CloudTrail or CloudWatch.

Lambda can search the logs for specific events or log entries as they occur and send out notifications via SNS. You can also easily implement custom notification hooks to Slack, Zendesk, or other systems by calling their API endpoints from within Lambda.
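As a sketch of the CloudWatch case: when a log group is subscribed to a Lambda function, the event arrives as base64-encoded, gzip-compressed JSON under `awslogs.data`. The code below decodes it, keeps messages containing a keyword, and publishes them to SNS. The keyword, the subject line, and the topic ARN you pass in are assumptions for illustration.

```python
import base64
import gzip
import json


def extract_matching_messages(event: dict, keyword: str = "ERROR") -> list:
    """Decode a CloudWatch Logs subscription event and filter its messages."""
    compressed = base64.b64decode(event["awslogs"]["data"])
    payload = json.loads(gzip.decompress(compressed))
    return [e["message"] for e in payload["logEvents"] if keyword in e["message"]]


def notify(sns_client, topic_arn: str, messages: list) -> None:
    """Publish matches to SNS; `sns_client` is expected to be a boto3 SNS client."""
    if messages:
        sns_client.publish(
            TopicArn=topic_arn,
            Subject="Log alert",
            Message="\n".join(messages),
        )
```

A Slack or Zendesk hook would replace `notify` with an HTTP call to that system's API.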

6. Automated backups and everyday tasks

Scheduled Lambda events are great for housekeeping within AWS accounts. Creating backups, checking for idle resources, generating reports, and other recurring tasks can be implemented in no time using the boto3 Python library and hosted in AWS Lambda.
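A backup task of this kind might be sketched as below, assuming a boto3 EC2 client is passed in. The `Backup=true` tag is an illustrative convention rather than an AWS default, and the sketch reads only the first page of results, so a large account would need pagination.

```python
def snapshot_tagged_volumes(ec2_client, tag_key: str = "Backup",
                            tag_value: str = "true") -> list:
    """Create a snapshot for every EBS volume carrying the given tag.

    `ec2_client` is expected to be a boto3 EC2 client. Only the first
    page of describe_volumes results is processed in this sketch.
    """
    response = ec2_client.describe_volumes(
        Filters=[{"Name": f"tag:{tag_key}", "Values": [tag_value]}]
    )
    snapshot_ids = []
    for volume in response["Volumes"]:
        snapshot = ec2_client.create_snapshot(
            VolumeId=volume["VolumeId"],
            Description=f"Scheduled backup of {volume['VolumeId']}",
        )
        snapshot_ids.append(snapshot["SnapshotId"])
    return snapshot_ids
```

A scheduled rule would invoke a handler that calls this function with `boto3.client("ec2")`.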

7. Processing uploaded S3 objects

By using S3 object event notifications, you can start processing your files with Lambda as soon as they land in S3 buckets. Image thumbnail generation with AWS Lambda is a great example of this use case: the solution is cost-effective, and you don’t need to worry about scaling up because Lambda will handle any load!
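The first step in any such function is reading the bucket and key out of the notification. A minimal sketch follows; the actual processing (for example thumbnail generation) is left as a comment because it depends on the image library you choose.

```python
from urllib.parse import unquote_plus


def uploaded_objects(event: dict) -> list:
    """Return (bucket, key) pairs from an S3 event notification."""
    pairs = []
    for record in event.get("Records", []):
        bucket = record["s3"]["bucket"]["name"]
        # Object keys arrive URL-encoded, so spaces show up as '+'.
        key = unquote_plus(record["s3"]["object"]["key"])
        pairs.append((bucket, key))
    return pairs


def lambda_handler(event, context):
    for bucket, key in uploaded_objects(event):
        # Thumbnail generation or other processing would go here.
        print(f"New object: s3://{bucket}/{key}")
```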

8. Backend cleaning

One of the top priorities for any consumer-oriented website is a rapid response time. A slow response, or even a visible delay in responding, can translate into a significant loss of traffic. If your site is too busy taking care of background tasks to quickly display the next page or to show search results, consumers will simply switch to another site that promises to deliver the same kind of information or services. You may not be able to do much about some sources of delay, such as slow ISPs, but there are things that you can do to speed up response time on your end.

Where does AWS Lambda come in? Backend tasks should not be a source of delay in responding to frontend requests. If you need to parse user input in order to store it to a database, or if there are other input-processing tasks which are not necessary for rendering the next page, you can send the data to an AWS Lambda process, which can in turn not only clean up that data but also send it on to the database or application that will use it.
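The clean-up step itself is usually plain data normalization. As a small sketch, with entirely hypothetical field names (`email`, `name`, `age`), a function that tidies a submitted form before it is stored might look like this:

```python
def clean_user_input(raw: dict) -> dict:
    """Normalize a submitted form before it is stored.

    The field names are hypothetical; adapt them to your own schema.
    """
    cleaned = {
        # Trim whitespace and lowercase the address for consistent lookups.
        "email": raw.get("email", "").strip().lower(),
        # Collapse runs of whitespace inside the name.
        "name": " ".join(raw.get("name", "").split()),
    }
    age = str(raw.get("age", "")).strip()
    # Keep the age only if it is a plain non-negative integer.
    cleaned["age"] = int(age) if age.isdecimal() else None
    return cleaned
```

The frontend can return its response immediately while a Lambda function runs this kind of logic and writes the result to the database.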

9. Bulk real-time data processing

It isn't unusual for an application, or even a website, to handle a certain amount of real-time data. Data can stream in from communication devices, from peripherals interacting with the physical world, or from user input devices. In most cases, this data is likely to arrive in short bursts, or even a few bytes at a time, in formats that can be easily parsed. There are times, however, when your application may need to handle large volumes of streaming input data, and moving that data to temporary storage for later processing may not be an adequate solution.

You may need, for example, to pick out specific values in a rapid stream of data from a remote telemetry device, as it is coming in. If you send the data stream to an AWS Lambda application designed to quickly pull and process the required information, you can handle the necessary real-time tasks without slowing down your main application.
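If the stream is delivered through Kinesis, each record reaches the function base64-encoded. The sketch below decodes a batch and keeps only readings above a threshold. It assumes the device sends JSON objects, and the `temperature` field and the threshold value are made up for the example.

```python
import base64
import json


def readings_over_threshold(event: dict, field: str = "temperature",
                            threshold: float = 90.0) -> list:
    """Decode Kinesis records and keep readings above a threshold."""
    selected = []
    for record in event.get("Records", []):
        # Kinesis delivers each record's payload base64-encoded.
        payload = json.loads(base64.b64decode(record["kinesis"]["data"]))
        value = payload.get(field)
        if value is not None and value > threshold:
            selected.append(payload)
    return selected
```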

Limitations of AWS Lambda

As we have described so far, AWS Lambda can and should be one of your main go-to resources for handling repetitive or time-consuming tasks, along with the other heavy-lifting jobs of the data-processing world. It frees your main online services to focus on high-priority frontend tasks, such as responding rapidly to user requests, and it allows you to offload many processes that would otherwise slow down your system.

However, AWS Lambda is not a silver bullet for every use case. For example, it should not be used for anything that you need to control or manage at the infrastructure level, nor should it be used for a large monolithic application or suite of applications.

Lambda comes with a number of “limitations”, which are good to keep in mind when architecting a solution.

There are some “hard limitations” for the runtime environment: the disk space is limited to 512 MB, memory can vary from 128 MB to 3 GB, and the execution timeout for a function is 15 minutes. Package constraints like the size of the deployment package (250 MB) and the number of file descriptors (1024) are also defined as hard limits.

Similarly, there are “limitations” for the requests served by Lambda: the request and response body of a synchronous invocation can each be a maximum of 6 MB, while an asynchronous invocation payload can be up to 256 KB. At the moment, the only soft “limitation”, which you can request to be increased, is the number of concurrent executions. This is a safety feature that throttles the number of parallel executions to prevent accidental recursion or infinite loops in the code from running wild.
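A caller can guard against the payload limits before invoking a function. The sketch below uses the 6 MB and 256 KB figures quoted above and assumes they are measured in binary units against the JSON-encoded payload; treat it as a pre-flight check rather than an exact reproduction of the service's accounting.

```python
import json

SYNC_LIMIT_BYTES = 6 * 1024 * 1024   # 6 MB synchronous payload limit
ASYNC_LIMIT_BYTES = 256 * 1024       # 256 KB asynchronous payload limit


def fits_payload_limit(payload, asynchronous: bool = False) -> bool:
    """Check whether the JSON-encoded payload is within the invocation limit."""
    size = len(json.dumps(payload).encode("utf-8"))
    limit = ASYNC_LIMIT_BYTES if asynchronous else SYNC_LIMIT_BYTES
    return size <= limit
```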

Are These True Limitations?

You may be wondering why I keep putting the word “limitation” in quotes. It is because these should not be viewed as limitations, but as well-defined architectural principles for the Lambda service:

- If your function needs to run for hours, it belongs on Elastic Beanstalk or EC2 rather than Lambda.

- If the deployment package jar is greater than 50 MB in size, it should be broken down into multiple packages and functions.

- If the request payloads exceed the limits, you should break them up across multiple request endpoints.

It all comes down to avoiding monolithic applications deployed as Lambda functions, and designing stateless microservices as a collection of functions instead. With this mindset, the “limitations” make complete sense.

We've seen the use cases. But which companies are using AWS Lambda? Let's explore three examples.

Who's Using AWS Lambda?

1. Netflix

Netflix has long been the poster child for AWS. It’s not surprising they were one of the first to put Lambda to use. Netflix handles petabytes of video and other data types that need to be streamed to its 50 million customers globally. Their AWS stack is made up of up to 50,000 instances in 12 zones, and 50% of those instances turn over daily, with almost all of them changing each month. To support this scale of operations, the company needs to push the boundaries in the way it builds and manages its infrastructure.

The approach they take is to abstract away the underlying infrastructure as much as possible, so they can focus on their applications instead. Previously, AWS APIs enabled them to control infrastructure programmatically, and fairly efficiently. However, with Lambda, they’ve discovered a new level of abstraction whereby, instead of polling the infrastructure in order to manage and control it, they can use declarative rules-based triggers to make infrastructure automatically adapt to changes in the application layer.

For example, Netflix uses Lambda to execute rule-based checks and routing to handle data stored in S3. Whenever a piece of data is written to S3, a Lambda function decides which data needs to be backed up and which needs to be stored offsite. It then validates whether the data has reached its destination or whether an alarm needs to be triggered.

Similarly, with security, Netflix uses Lambda functions to check whether new instances created by various services are secure. If vulnerabilities or misconfigurations are spotted, the instance is shut down automatically.

Another way Netflix uses Lambda to enforce better security is with its open source SSH authorization tool BLESS. BLESS runs as a Lambda function and integrates with AWS KMS to verify and sign public SSH keys. This is easier than setting up new infrastructure for SSH validation, as the infrastructure is fully managed by AWS. It’s also safer, as the keys are active only briefly, so compromised keys are useful for just a short while. Other organisations, like Lyft, are also using BLESS to secure their systems with two-factor authentication.

2. Localytics

The mobile app analytics startup Localytics is also making good use of Lambda in its operations. The team is big on ChatOps, as it not only speeds up operations but also breaks down silos between teams, thus facilitating the DevOps model. Localytics uses Lambda along with Slack to enable its teams to manage infrastructure more easily.

Localytics leverages the Serverless framework to manage the flow of code across the development pipeline. They’ve open-sourced a Node.js package to create Slack bot Lambda functions easily. Once configured, these Lambda functions can take action on commands like ‘/bot ping’ or ‘/bot echo’. Since their infrastructure is predominantly AWS-centric, Lambda allows them to easily leverage other AWS services and accomplish complex cross-service tasks that would otherwise take a lot of effort. Thus, Lambda helps Localytics simplify its infrastructure management using ChatOps, with better integration across other AWS services.

3. REA Group

For our final example of AWS Lambda in action, we turn to Australia’s leading real estate website, REA Group. They built a recommendation engine for their website, and used Lambda to power this essential feature. This feature involved a million JSON files being processed daily by Lambda. Because of the large volume of data, each Lambda job could run for more than five minutes. So, they decided to break down the data into smaller chunks and then process it using Lambda.

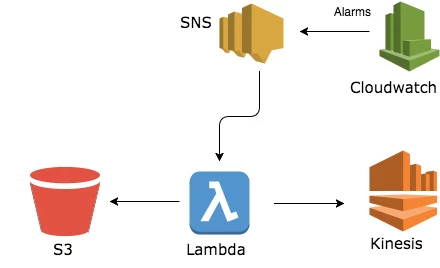

REA Group used a collection of different AWS services like SNS, DynamoDB, Kinesis, and CloudWatch to run the entire operation.

REA uses Cronitor and PagerDuty for monitoring, and CloudWatch Logs for logging.

AWS Lambda Opens Possibilities

As these examples make clear, AWS Lambda is powering unique use cases in enterprises across the world. From running core cloud platforms to extending legacy applications, and even enabling modern features like recommendation engines—Lambda is versatile and flexible. As you look to expand your product’s capabilities, or completely overhaul the way it performs tasks, do consider AWS Lambda. It’s a lot easier to manage than regular cloud instances, and opens up a world of possibilities in the form of AWS’ various services.

To learn more, check out our whitepaper: Introduction to Serverless Computing with AWS Lambda.
